The Framework Behind AI That Actually Works in Production

QUALITARA

02.25.2026

Plan, Build, Run: A lifecycle framework for AI that delivers

AI is no longer experimental. Companies across industries are treating it as core infrastructure, something that changes how they build and operate software. But knowing that AI matters and knowing how to deploy it well are very different things. Most companies that try end up spending too much, shipping systems that break in unpredictable ways, and accumulating technical debt that gets harder to unwind over time.

There is another pressure building in the background. Right now, AI providers are selling intelligence at subsidized prices. That will not last. Last year we wrote about how investing in structured data today could protect against rising costs tomorrow. But we left an open question: what does "structured" actually mean when you are weaving AI into the way a company operates?

This is our answer. It comes from what we have built internally, what went wrong along the way, and the framework we now use to govern every AI system we ship. We call it Plan, Build, Run.

The Gap Between Knowing and Doing

Most engineering leaders already know that throwing unstructured data at a model is a bad idea. The brute-force approach gets more expensive and more fragile every quarter, and the risk goes up whenever token prices do. The problem is that knowing this does not tell you what to build instead, or how to build it.

The numbers are sobering. S&P Global found that 42% of companies walked away from most of their AI initiatives in 2025, more than double the year before. RAND estimates the overall failure rate for AI projects at above 80%. According to IDC, out of every 33 AI pilots that get launched, only 4 make it to production. When you look at why, the same causes keep showing up: the data infrastructure was not ready, nobody owned the outcome, there were no real success criteria, and there was no discipline around the lifecycle of the system.

We have come to believe the bottleneck was never the models. It was always process.

Plan: Deciding What AI Should and Should Not Do

At Qualitara, AI is part of how we work at every stage. It is becoming the foundation of our internal tools. But the reason it works for us is not the technology. It is that nothing gets built unless we have thought through clarity, governance, and accountability first.

Planning is where we audit the systems, data, and workflows involved. We define requirements, settle on architecture, set guardrails, and agree on what success looks like. All of that happens before anyone writes a line of code.

This kind of rigor is not specific to AI. Any build fails without proper architecture. But AI adds a trap that traditional software does not: a project can meet every stated requirement and still be a failure because it was never grounded in how the business actually operates. Planning is how you catch that before it becomes expensive.

Two projects taught us this firsthand.

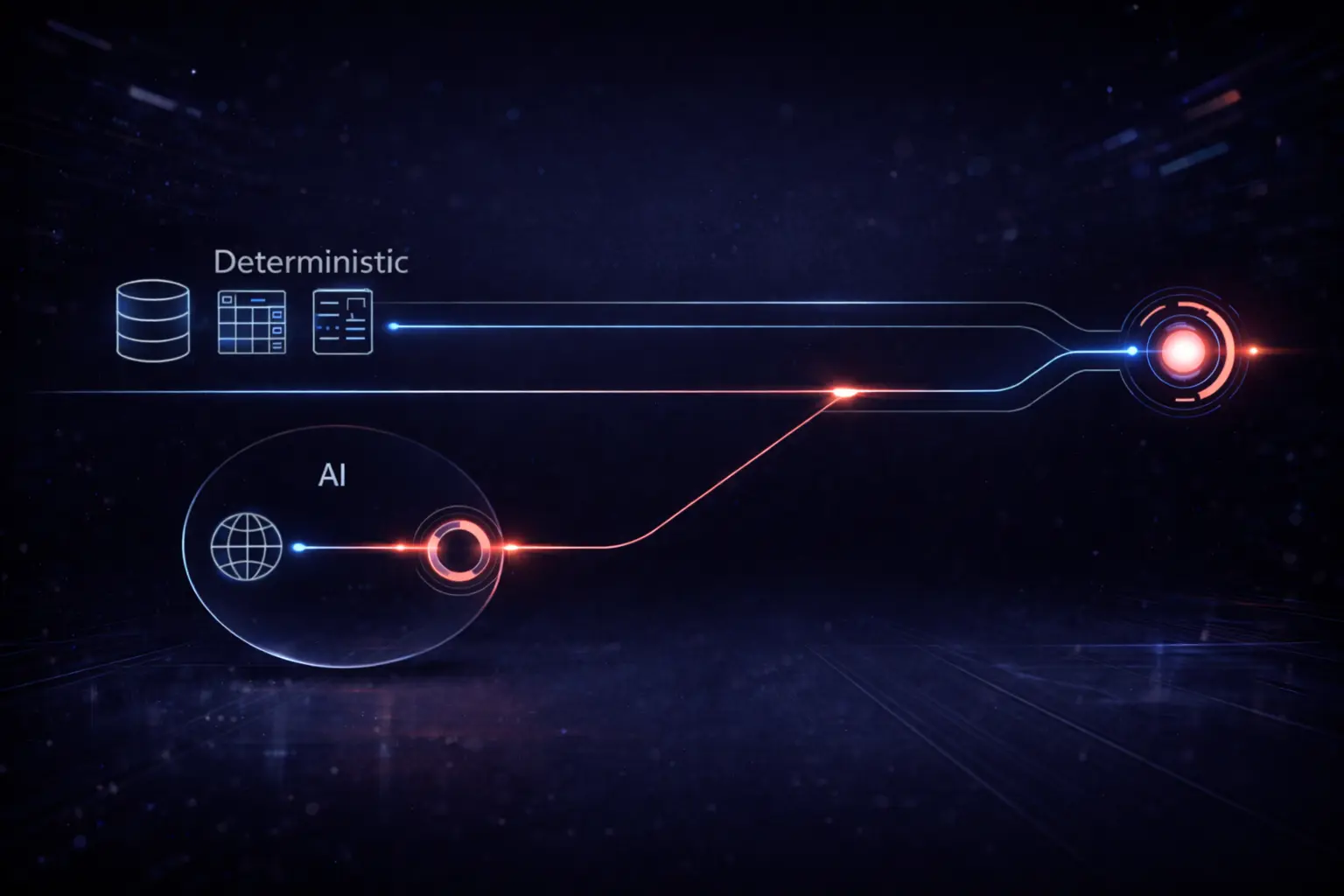

The first was a deal intelligence system. We skipped planning entirely on the first attempt. Raw CRM data went into a model, and we asked it to produce a summary. What came back was full of hallucinated contacts, mixed-up records, and analysis so generic it was useless. The API costs were enormous relative to the value it produced. It failed on every front. When we went back and defined clearly what the AI should handle and what it should not, the right architecture became obvious. About 70% of the work could be handled by deterministic matching, straightforward logic that does not need a model at all. AI only needed to step in for the remaining 30%, the cases where the data was genuinely ambiguous.

The second was a lead generation and network intelligence system. This time we planned the entire pipeline before touching an API. We mapped out what data to pull, what to filter, where rules alone could do the job, and where AI was actually necessary. The result was a system that processes hundreds of leads, surfaces qualified opportunities, and runs predictably in production.

What surprised us in both cases was how much of the problem we could solve without AI at all, just by being deliberate about where we applied deterministic logic and where we actually needed reasoning. Costs went down. Accuracy went up.
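The split between deterministic logic and reasoning can be sketched in a few lines of Python. This is an illustration under stated assumptions, not the production system: the `Contact` type, the email-based matching rule, and the `ask_model` callback are all hypothetical stand-ins. The point is only the ordering, where the cheap rule runs first and the model sees just what the rule cannot resolve.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class Contact:
    name: str
    email: str


def normalize(email: str) -> str:
    """Lowercase and trim an email so exact comparison is reliable."""
    return email.strip().lower()


def match_deterministic(record: Contact, known: dict[str, str]) -> Optional[str]:
    """Return an account ID when the email matches exactly, else None."""
    return known.get(normalize(record.email))


def resolve(
    record: Contact,
    known: dict[str, str],
    ask_model: Callable[[Contact], str],  # hypothetical model call
) -> tuple[str, str]:
    """Try the rule first; fall back to the model only for ambiguous records."""
    account = match_deterministic(record, known)
    if account is not None:
        return account, "rule"
    return ask_model(record), "model"
```

Returning the route (`"rule"` or `"model"`) alongside the answer makes it possible to measure what share of the workload each layer actually handles.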

This is also where the economic future becomes relevant. Companies that do not take the time to define what their AI systems need to do will fall back on brute force by default. That is already expensive. Soon it will be unsustainable.

Build: Designing the Architecture Around Reasoning and Logic

With the plan in place, the architecture needs to reflect the split between what requires reasoning and what can be handled by logic. In practice this means a layered pipeline. Deterministic logic takes care of everything predictable. AI handles what is ambiguous. Each layer feeds into the next.

“IF EVERY INPUT IN YOUR SYSTEM PASSES THROUGH AN AI MODEL, YOU DO NOT HAVE AN AI PROBLEM. YOU HAVE AN ARCHITECTURE PROBLEM.”

Matching the right model to each task is not a nice-to-have. It is a matter of survival. Fast, lightweight models can handle classification and screening. You bring in the powerful models for synthesis and complex reasoning. A frontier model will technically work for simple categorization, but the cost at scale will break you.
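One simple way to enforce that matching is a routing table that maps each task type to a model tier. The sketch below assumes two tiers and four task types taken from the paragraph above. The model names are placeholders, not real product identifiers.

```python
from enum import Enum


class Task(Enum):
    CLASSIFY = "classify"
    SCREEN = "screen"
    SYNTHESIZE = "synthesize"
    REASON = "reason"


# Hypothetical model names; substitute whatever your provider offers.
LIGHT_MODEL = "light-model"
FRONTIER_MODEL = "frontier-model"

# Cheap tier for high-volume, low-ambiguity work; expensive tier for the rest.
ROUTES = {
    Task.CLASSIFY: LIGHT_MODEL,
    Task.SCREEN: LIGHT_MODEL,
    Task.SYNTHESIZE: FRONTIER_MODEL,
    Task.REASON: FRONTIER_MODEL,
}


def pick_model(task: Task) -> str:
    """Look up the model tier assigned to a task type."""
    return ROUTES[task]
```

Keeping the mapping in one table means a pricing change becomes a one-line edit rather than a search through every call site.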

One of the most important things to build into the architecture from the start is provenance. You need to be able to see what the system is doing at every step: which data sources it is pulling from, how long each task takes, and how many tokens each step consumes. Without that visibility, you cannot even ask the basic question of whether a given step is worth what it costs. In our case, this takes the form of structured logging and a lightweight interface that shows token usage and execution time per task. When you can see every step clearly, you start finding places to simplify. You cut steps that are not pulling their weight. You reduce API spend not just by improving accuracy but by eliminating waste.
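As a rough illustration of structured logging per step, the sketch below wraps each pipeline step in a context manager that records its data sources, duration, and token count as one JSON line. The function name `traced_step`, the logger name, and the field names are assumptions made for this example. Only the Python standard library is used.

```python
import json
import logging
import time
from contextlib import contextmanager

logger = logging.getLogger("pipeline.provenance")


@contextmanager
def traced_step(name: str, sources: list[str]):
    """Time one pipeline step and log it as a single JSON line."""
    record = {"step": name, "sources": sources, "tokens": 0}
    start = time.perf_counter()
    try:
        yield record  # the step body sets record["tokens"]
    finally:
        # Runs even if the step raises, so failed steps are still visible.
        record["seconds"] = round(time.perf_counter() - start, 4)
        logger.info(json.dumps(record))


# Example: the token count would normally come from the provider's response.
with traced_step("summarize_deal", ["crm"]) as step:
    step["tokens"] = 512
```

Because every step emits the same fields, the log can feed a simple per-task view of token usage and execution time without extra parsing.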

Once a system is in production, edge cases stop being the exception and become the norm. Both of our internal systems went through multiple rounds of iteration to handle them properly. That was not cosmetic work. It was structural.

Knowing when not to use AI matters just as much as knowing when to use it. Often the most valuable architectural decision is recognizing that a simple rule outperforms a model for a given task. We also use AI to sharpen the engineering process itself: requirements analysis, validation, and stress testing. AI does not replace rigor. It makes rigor go further. But only when there is a structured process holding it together.

Run: Earning Trust Through Discipline

AI fails quietly. When a deterministic system breaks, it throws an error. When an AI system gets something wrong, the output still looks reasonable. If no one is paying attention, bad results pile up in silence until the whole system loses credibility.

Three practices separate systems that last from systems that get pulled.

Feedback loops over retraining. When the deal intelligence system first went live, the output quality was mediocre. Rather than retraining the model, we built a feedback mechanism directly into the workflow. Every correction gets stored and fed back into subsequent prompts as additional context. There is no fine-tuning pipeline. Just structured iteration. By around the twentieth run, the system was outperforming manual analysis because it could consistently process more context than any person could. AI systems are not inherently good or bad. They are exactly as good as the cycles of refinement around them.
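The mechanism described here, storing corrections and feeding them into later prompts, can be sketched as follows. The `FeedbackStore` class, the prompt wording, and the cap on how many corrections are included are all hypothetical. A real system would persist corrections rather than hold them in memory.

```python
from dataclasses import dataclass, field


@dataclass
class FeedbackStore:
    corrections: list[str] = field(default_factory=list)
    max_items: int = 20  # hypothetical cap, assumed positive

    def add(self, note: str) -> None:
        """Record one human correction."""
        self.corrections.append(note)

    def build_prompt(self, task: str) -> str:
        """Prepend the most recent corrections to the task as context."""
        recent = self.corrections[-self.max_items:]
        if not recent:
            return task
        notes = "\n".join(f"- {c}" for c in recent)
        return f"Corrections from earlier runs:\n{notes}\n\nTask:\n{task}"
```

No model weights change in this loop. Each run simply starts with more context than the one before it.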

Graceful degradation. Every production AI system will produce wrong answers sometimes. The system has to be designed with that assumption baked in. When confidence is low, results get flagged for a human to review. Timeouts get logged and the pipeline keeps moving. Partial data does not cause a crash. Flashy output might get attention, but reliability is what earns trust over time.
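A minimal sketch of those three behaviors, assuming a hypothetical `score` callback that returns an answer and a confidence value, and an arbitrary confidence threshold of 0.7. Low-confidence results are flagged, timeouts are logged and skipped, and records with missing fields are routed to review instead of raising.

```python
import logging
from dataclasses import dataclass
from typing import Callable, Optional

logger = logging.getLogger("pipeline.run")

CONFIDENCE_FLOOR = 0.7  # hypothetical threshold; tune per task


@dataclass
class Result:
    item_id: str
    answer: Optional[str]
    status: str  # "ok", "needs_review", or "skipped"


def process(
    items: list[dict],
    score: Callable[[dict], tuple[str, float]],  # hypothetical model call
) -> list[Result]:
    """Process every item; no single failure stops the batch."""
    results = []
    for item in items:
        item_id = str(item.get("id", "unknown"))
        try:
            answer, confidence = score(item)
        except TimeoutError:
            logger.warning("timeout on %s; continuing", item_id)
            results.append(Result(item_id, None, "skipped"))
            continue
        except (KeyError, ValueError) as exc:
            logger.warning("partial data on %s: %s", item_id, exc)
            results.append(Result(item_id, None, "needs_review"))
            continue
        status = "ok" if confidence >= CONFIDENCE_FLOOR else "needs_review"
        results.append(Result(item_id, answer, status))
    return results
```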

Cost governance. If you are not tracking token usage, model distribution, and cost per query while the system is running, you are flying blind on what it actually costs. This is the point where financial discipline stops being theoretical.
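The three metrics named above can be tracked with a small in-memory ledger. This is a sketch only: the model names and per-token prices are invented for illustration, and a production version would read real prices from configuration and persist the counts.

```python
from collections import defaultdict
from dataclasses import dataclass, field

# Hypothetical prices in USD per 1,000 tokens.
PRICE_PER_1K = {"light-model": 0.001, "frontier-model": 0.03}


@dataclass
class CostLedger:
    tokens_by_model: dict = field(default_factory=lambda: defaultdict(int))
    queries: int = 0

    def record(self, model: str, tokens: int) -> None:
        """Log one query's token usage against the model that served it."""
        self.tokens_by_model[model] += tokens
        self.queries += 1

    def total_cost(self) -> float:
        return sum(
            PRICE_PER_1K[m] * t / 1000 for m, t in self.tokens_by_model.items()
        )

    def cost_per_query(self) -> float:
        return self.total_cost() / self.queries if self.queries else 0.0

    def model_share(self) -> dict:
        """Fraction of all tokens consumed by each model."""
        total = sum(self.tokens_by_model.values())
        if not total:
            return {}
        return {m: t / total for m, t in self.tokens_by_model.items()}
```

A rising frontier-model share in `model_share()` is an early signal that work is drifting back toward brute force.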

There is also a longer-term benefit that only reveals itself through sustained operation. As you watch the system work step by step, with the kind of provenance described above, you begin to notice where cleaning your data and standardizing your processes could take AI out of the equation altogether. Tasks that seemed to require a model turn out to be perfectly solvable with deterministic logic once the underlying data is in better shape. The goal is to keep AI where it genuinely adds value, in synthesis and in the hard reasoning work that nothing else can do, and push everything else into deterministic layers that carry no per-token cost. Getting there takes time. But the only path is through the observability and iteration that the Run phase demands.

Why the Framework Matters

Plan, Build, Run is not a slogan. It is a financial hedge. Every decision you make during Plan about how to partition workloads, every choice you make during Build about which model goes where, and every metric you track during Run that tells you what a query actually costs all add up. They compound into the kind of resilience that holds when the market shifts underneath you.

The discipline stays the same regardless of the client, the solution, or the size of the project.

The companies that come out ahead with AI will not be the ones with the most impressive demos or the most advanced models. They will be the ones that brought discipline to the process. The ones who figured out where AI belongs and, just as importantly, where it does not.

AI without discipline is a liability. AI with lifecycle governance is a competitive advantage.